CUDA block dimensions (dim3)
12/13/2022

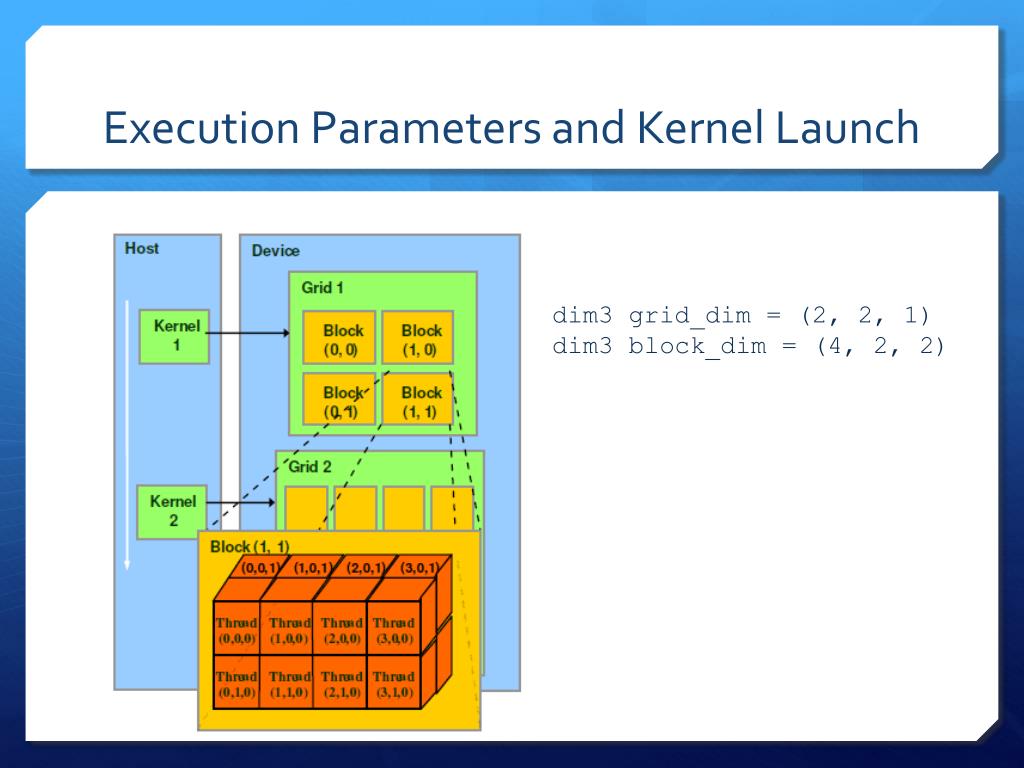

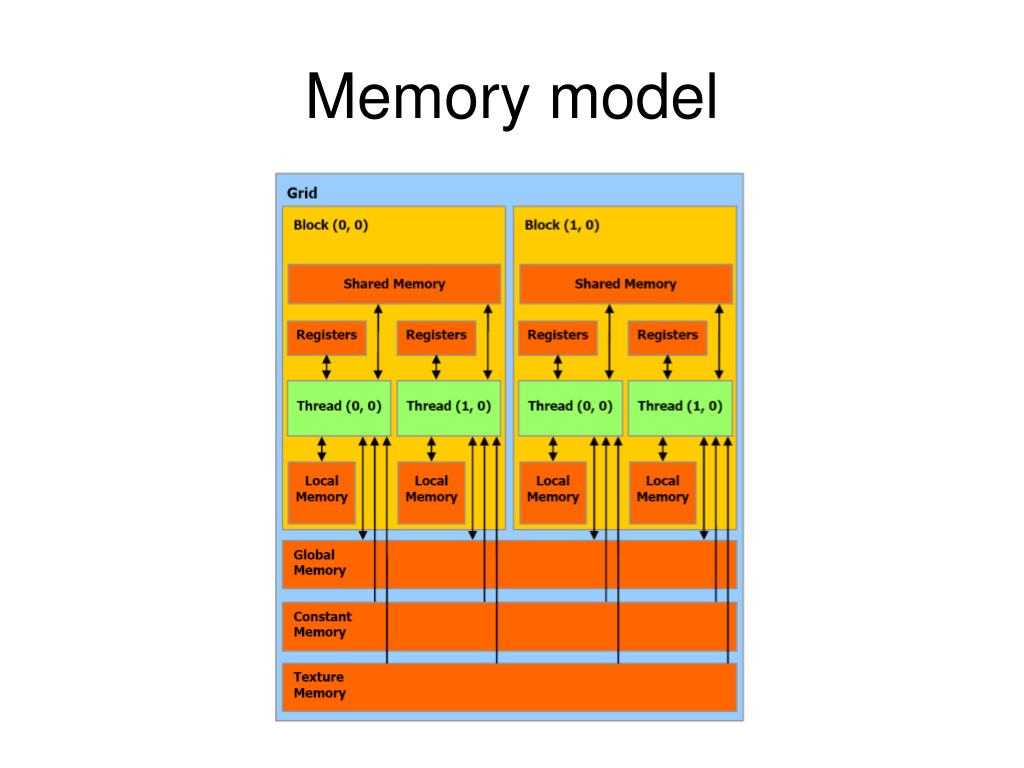

These limits are documented in the CUDA Programming Guide. A kernel launch specifies two things: the number of threads per block and the number of blocks per grid, where the grid is the set of all blocks associated with that launch. Both are passed as `dim3` values with x, y and z components. For a thread block you have a limit on the total number of threads (1024 per block on current architectures) as well as a limit on each dimension. For example, a block size of 128 threads stays well inside these limits and is a multiple of the 32-thread warp size.

To execute any CUDA program, there are three main steps: copy the input data from host memory to device memory (host-to-device transfer), load the GPU program and execute it, and copy the results from device memory back to host memory (device-to-host transfer). Figure 1 shows that the CUDA kernel is a function that gets executed on the GPU. Correct memory management is one of the most important sources of performance improvement. Registers, for example, are private to each thread, so registers assigned to one thread are not visible to other threads. The maximum number of active threads depends on the GPU; to see the limits of any CUDA-enabled device, run the CUDA sample code deviceQuery.

What is the optimal number of threads per block? The answer depends largely on how resource intensive the kernel is. There is also a limit on the number of active blocks per multiprocessor, so blocks should not be made too small. In one reported test on the Pascal architecture, the best speedup came from 256 threads per block.
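Choosing the launch configuration is mostly arithmetic on the problem size. The sketch below is plain C++ so it compiles without nvcc; `Dim3` is a hypothetical stand-in for CUDA's built-in `dim3` (three unsigned components, unspecified ones default to 1), and `ceilDiv`, `gridFor1D`, `gridFor2D` and `totalThreads` are illustrative helpers, not part of the CUDA API. It rounds the grid size up so every element gets a thread, using the 128-thread block size mentioned above.

```cpp
#include <cassert>

// Hypothetical stand-in for CUDA's dim3: unspecified components default to 1.
struct Dim3 {
    unsigned x, y, z;
    constexpr Dim3(unsigned x_ = 1, unsigned y_ = 1, unsigned z_ = 1)
        : x(x_), y(y_), z(z_) {}
};

// Ceiling division: smallest number of blocks of size `block` covering `n` items.
constexpr unsigned ceilDiv(unsigned n, unsigned block) {
    return (n + block - 1) / block;
}

// 1-D launch: enough blocks of `threadsPerBlock` threads to cover n elements.
inline Dim3 gridFor1D(unsigned n, unsigned threadsPerBlock) {
    assert(threadsPerBlock > 0 && threadsPerBlock <= 1024);  // per-block thread limit
    return Dim3(ceilDiv(n, threadsPerBlock));
}

// 2-D launch, e.g. one thread per pixel of a width x height image.
inline Dim3 gridFor2D(unsigned width, unsigned height, Dim3 block) {
    assert(block.x > 0 && block.y > 0 && block.z > 0);
    assert(block.x * block.y * block.z <= 1024);
    return Dim3(ceilDiv(width, block.x), ceilDiv(height, block.y));
}

// Total threads launched. This can exceed the problem size, which is why
// kernels usually guard their work with a bounds check such as `if (i < n)`.
inline unsigned long long totalThreads(Dim3 grid, Dim3 block) {
    return 1ULL * grid.x * grid.y * grid.z * block.x * block.y * block.z;
}
```

In actual CUDA source the equivalent 1-D launch would be written `dim3 block(128); dim3 grid((n + block.x - 1) / block.x); kernel<<<grid, block>>>(...);`, where `kernel` is a placeholder name. For 1000 elements this gives 8 blocks, i.e. 1024 threads, 24 of which fail the bounds check and do nothing.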
The block size is set by the programmer, but the number of threads per block you choose within the hardware constraints affects performance. Each streaming multiprocessor (SM) on the GPU must have enough active warps to hide memory and instruction latency. The orthodox approach is to aim for good hardware occupancy and then benchmark: because block sizes should be multiples of the warp size, the search space is small and the best configuration for a given kernel can be found by trying each candidate.
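Because candidate block sizes are multiples of the warp size, the whole search space can be enumerated. The sketch below uses a deliberately simplified occupancy model that considers only two per-SM limits (maximum resident threads and maximum resident blocks), passed in as parameters because they differ between GPUs; the 2048-thread and 32-block values used in the usage note are assumptions for illustration, not figures for a specific card. Real occupancy also depends on register and shared-memory use, which the CUDA runtime's `cudaOccupancyMaxPotentialBlockSize` takes into account. `Candidate` and `sweepBlockSizes` are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

constexpr unsigned kWarpSize = 32;

struct Candidate {
    unsigned blockSize;    // threads per block
    unsigned blocksPerSM;  // resident blocks per SM under this simplified model
    double occupancy;      // resident threads / max resident threads per SM
};

// Simplified model: ignores registers and shared memory entirely.
inline std::vector<Candidate> sweepBlockSizes(unsigned maxThreadsPerSM,
                                              unsigned maxBlocksPerSM,
                                              unsigned maxThreadsPerBlock = 1024) {
    assert(maxThreadsPerSM > 0 && maxBlocksPerSM > 0);
    std::vector<Candidate> out;
    // Only multiples of the warp size are considered.
    for (unsigned b = kWarpSize; b <= maxThreadsPerBlock; b += kWarpSize) {
        // Residency is capped by whichever per-SM limit is hit first.
        unsigned blocks = std::min(maxBlocksPerSM, maxThreadsPerSM / b);
        double occ = static_cast<double>(blocks * b) / maxThreadsPerSM;
        out.push_back({b, blocks, occ});
    }
    return out;
}
```

With the assumed limits (2048 threads, 32 blocks per SM), 32-thread blocks reach only 50% occupancy because the block limit is hit first, which is the "blocks should not be too small" effect; from 64 threads upward many sizes reach 100% in this model. A sweep like this only narrows the candidates; timing the real kernel at each size settles the choice.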

In CUDA, threads are organized in a two-level hierarchy: a grid comprises blocks, and each block comprises threads. Early CUDA cards, up through compute capability 1.3, allowed a maximum of 512 threads per block and 65535 blocks in a single one-dimensional grid. One of the most important elements of CUDA programming is choosing the right grid and block dimensions for the problem size.
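Inside a 1-D kernel, each thread usually finds its element with `blockIdx.x * blockDim.x + threadIdx.x`. The host-side sketch below emulates that mapping with ordinary loops (the loops stand in for the GPU's parallel threads; `globalIndex` and `coversExactlyOnce` are hypothetical helpers) and computes the largest one-element-per-thread 1-D launch allowed by the compute capability 1.3 limits quoted above.

```cpp
#include <cassert>
#include <vector>

// Same formula a 1-D kernel uses: blockIdx.x * blockDim.x + threadIdx.x.
constexpr unsigned long long globalIndex(unsigned blockIdx, unsigned blockDim,
                                         unsigned threadIdx) {
    return 1ULL * blockIdx * blockDim + threadIdx;
}

// Emulates a launch of gridDim blocks of blockDim threads over n elements and
// checks that, with the usual `if (i < n)` guard, each element is hit once.
inline bool coversExactlyOnce(unsigned n, unsigned gridDim, unsigned blockDim) {
    assert(blockDim > 0);
    std::vector<int> hits(n, 0);
    for (unsigned b = 0; b < gridDim; ++b)
        for (unsigned t = 0; t < blockDim; ++t) {
            unsigned long long i = globalIndex(b, blockDim, t);
            if (i < n) ++hits[i];  // out-of-range threads do nothing
        }
    for (int h : hits)
        if (h != 1) return false;
    return true;
}

// Early (compute capability <= 1.3) limits from the text.
constexpr unsigned long long kMaxThreadsPerBlockCC13 = 512;
constexpr unsigned long long kMaxBlocksPerGridCC13 = 65535;
// Largest 1-D problem one launch could cover at one element per thread.
constexpr unsigned long long kMaxThreadsCC13 =
    kMaxThreadsPerBlockCC13 * kMaxBlocksPerGridCC13;  // 33,553,920
```

A grid of 8 blocks of 128 threads covers 1000 elements exactly once, while 7 blocks (896 threads) leaves elements untouched. Problems larger than about 33.5 million elements on those early cards needed a 2-D grid or a loop inside the kernel.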

The GPU is a throughput-oriented device. The maximum x, y and z dimensions of a block are 1024, 1024 and 64, and the product x × y × z must not exceed 1024. A thread block is a programming abstraction that represents a group of threads that can be executed serially or in parallel. Although we have described a hierarchy of threads, blocks and grids, this is the programmer's view: the hardware groups the threads of a block into warps of 32 threads that execute the same instruction. To take advantage of the warp architecture, developers need to understand how to coalesce memory accesses and how to manage control-flow divergence. More generally, CUDA is a parallel computing platform and application programming interface (API) model suited to algorithms that process large blocks of data in parallel. Threads should run in groups of at least 32 for best performance, with the total number of threads numbering in the thousands.
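Both the per-dimension limits and the 1024-thread total have to hold, and the hardware schedules a block as whole warps of 32 threads. The sketch below checks a block shape against the limits stated above (assumed here to be those of current GPUs; querying the device, e.g. with deviceQuery, is safer than hard-coding them) and counts the warps it occupies. `isValidBlock`, `warpsPerBlock` and `idleLanes` are hypothetical helpers.

```cpp
#include <cassert>

constexpr unsigned kMaxBlockX = 1024, kMaxBlockY = 1024, kMaxBlockZ = 64;
constexpr unsigned kMaxThreadsPerBlock = 1024;
constexpr unsigned kWarpSize = 32;

// A block shape is legal only if every dimension and the product fit.
constexpr bool isValidBlock(unsigned x, unsigned y, unsigned z) {
    return x >= 1 && y >= 1 && z >= 1 &&
           x <= kMaxBlockX && y <= kMaxBlockY && z <= kMaxBlockZ &&
           1ULL * x * y * z <= kMaxThreadsPerBlock;
}

// Threads are grouped into warps; a partially filled last warp still
// occupies a full warp.
constexpr unsigned warpsPerBlock(unsigned x, unsigned y, unsigned z) {
    return (x * y * z + kWarpSize - 1) / kWarpSize;
}

// Lanes left unused in the last warp: a reason to prefer multiples of 32.
inline unsigned idleLanes(unsigned x, unsigned y, unsigned z) {
    assert(isValidBlock(x, y, z));
    return warpsPerBlock(x, y, z) * kWarpSize - x * y * z;
}

static_assert(isValidBlock(32, 32, 1), "1024 threads is the maximum");
static_assert(!isValidBlock(16, 16, 8), "2048 threads exceeds the total");
static_assert(!isValidBlock(1, 1, 65), "z is limited to 64");
```

A 10 × 10 block, for instance, has 100 threads but occupies 4 warps (128 lanes), leaving 28 lanes idle, whereas a 16 × 16 block fills its 8 warps exactly.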
